Kant was the systematizer of the epistemology implicit in Isaac Newton's physics.

The discovery of non-Euclidean geometries soon afterwards, around 1830 (followed by Riemann's lecture of 1854), and later, at the beginning of the 20th century, the discovery of special relativity destroyed his project of interpreting Raum (i.e. space) as an a priori intuition.

The death knell for Zeit (i.e. time) as an a priori intuition was Cantor's theory of transfinite numbers and actual infinity.

The Axiom of Choice made for a static concept of the infinite, in which the temporal succession of terms no longer had any room. Time had always been linked to arithmetic and counting, and Kant was no exception. Weierstrass, with his definition of limit processes, and Cantor, with das Kontinuum, ruined that link.

A better idea today might be to consider the Eddington constants as the true a priori intuitions for the human species. In other words, it would be better to consider that the conditions that made possible the existence of self-reflecting life in the Cosmos (Barrow and Tipler's “Anthropic Cosmological Principle”, whether weak or strong) are the real a priori conditions for our knowledge of the world. But this is another story, so another post.

Popper is believed to be the (equivalent) systematizer of the world coming out of the new mathematics and physics. I advance the idea that what Popper really did was to propose an Evolutionary Epistemology in the spirit of Konrad Lorenz (see his Kants Lehre, 1941) or J. Piaget, whereby successive approximations place increasingly stringent bounds on the adequacy of our understanding of the world versus the “Ding an sich”.

Science does not prove; it only gradually disproves previously held conceptions and ideas of the world.

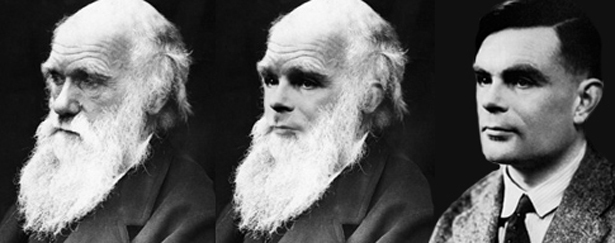

My point is the following: falsificationism is Evolutionary Epistemology, and at its core there is the blind algorithm that creates “competence without comprehension”; see this article by Daniel Dennett advancing exactly the same point w.r.t. Darwin and Turing. Seen this way, Lakatos is only Popper with a changed time scale: the human instead of the natural time scale.

A further unification of all this would be to say that “to understand means to compress algorithmically”, à la Gregory Chaitin. A successful compression is tantamount to understanding, even if it is based on pure correlations. Again Big Data?
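As a rough illustration of the compression idea, here is a minimal Python sketch. It rests on a loud assumption: algorithmic (Kolmogorov/Chaitin) complexity is uncomputable, so the sketch uses the general-purpose `zlib` compressor as a crude stand-in that only gives an upper bound. The helper `compression_ratio` and the two sample data sets are hypothetical names chosen for the example, not anything taken from Chaitin.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size.

    A low ratio means the compressor found regularities it could exploit.
    This is only a computable proxy, not true algorithmic complexity.
    """
    return len(zlib.compress(data, 9)) / len(data)


# Highly regular data: a short rule ("repeat 0123456789") generates all of it.
regular = b"0123456789" * 1000

# Bytes from the OS entropy source: no pattern for the compressor to find.
random_like = os.urandom(10_000)

regular_ratio = compression_ratio(regular)
random_ratio = compression_ratio(random_like)

print(f"regular data ratio:     {regular_ratio:.3f}")
print(f"random-like data ratio: {random_ratio:.3f}")
```

The regular sequence shrinks to a small fraction of its size because a short rule accounts for it, while the random bytes do not shrink at all. In the sense of the slogan, the first data set has been "understood" and the second has not, even though the compressor knows nothing about what the digits mean.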
